Facial recognition and the end of privacy – with Kashmir Hill

By Mark Hurst • November 2, 2023

Imagine that you have a digital device that takes a photo of every person you come in contact with: someone sitting next to you on a plane, a couple at the next table in a restaurant, shoppers in your aisle of the grocery store, strangers you pass on the sidewalk. Now imagine that the device can identify each individual you see, displaying personal information about them next to their image.

This is essentially the idea behind a facial recognition app from a company called Clearview AI: it’s a real-time search engine for faces. With an input of any photo containing faces, or a live video feed showing a face, the Clearview app can identify who’s in the image – from any angle and in almost any lighting condition – and display their name, links to their social media accounts, and a series of additional photos of them on Instagram, Facebook, Snap, Flickr, or other websites. It can also identify other people in those photos: friends, dates, family members, coworkers.

Right now the Clearview app is used mainly by police departments, the FBI, and Homeland Security, though the company would no doubt prefer to make this available as a consumer product. The privacy implications are, frankly, terrifying: if this app becomes widespread, we’ll lose any last shred of anonymity we still have in public.

This is already starting to happen. Facial recognition, after all, isn’t available only from Clearview. Madison Square Garden, here in New York City, conducts a face scan on every visitor to its venues (not just MSG but also Radio City Music Hall, where the Rockettes perform). The owner of MSG has configured his system to flag people he doesn’t like, such as anyone who works for any of the law firms currently suing his company. Once identified, the visitor is swiftly escorted off the premises. (This actually happened last December in a highly publicized incident.)

There is not much standing in the way of Clearview, or some other company, eventually making real-time facial recognition available to anyone with a smartphone. The US still has no federal privacy law. Given the political deadlock in Congress, and the immense sums spent by the tech industry on lobbying, there’s not much hope for privacy legislation any time soon. Meanwhile, the tech industry continues its growth-at-any-cost spread into every area of our lives.

To paraphrase an old saying: if you’re not freaked out, you’re not paying attention.

A new book about facial rec

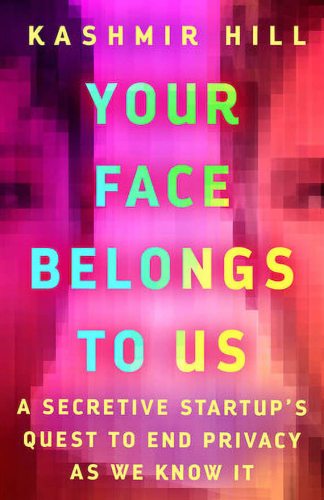

On Techtonic this week I spoke with New York Times tech reporter Kashmir Hill about her new book Your Face Belongs to Us: A Secretive Startup’s Quest to End Privacy as We Know It.

This interview is one to listen to, and the book is one to read.

• Episode links & listener comments

The book offers a history of facial recognition and a profile of Clearview AI and its founders. The tech has really taken shape in the past decade or so: the proliferation of photos on social media platforms, scraped and analyzed by new AI algorithms, set the stage for Clearview AI to put together its “search engine for faces.” Notably, Hill mentions, both Facebook and Google claim to have built similar facial rec features but decided not to launch them due to possible PR blowback. Clearview took the ethical leap (or perhaps the unethical leap) that even the sludge factories of northern California wouldn’t touch. And now we face a witches’ brew of privacy problems.

As Kashmir Hill pointed out in our interview, facial rec can open up new kinds of discrimination. Remember that being identified by a facial scan allows the watchers to then look up everything else in your online dossier. In the future, people might be barred from theaters, restaurants, stores, and more because of their politics, religion, economic or educational status, or who their friends are. (Remember: anyone who has ever appeared in a photo with you can also be identified.) Or, as in MSG’s case, the owner might simply not like your employer. Whatever the reason, once you’re forced to leave the premises, there’s no recourse.

Even in Clearview’s case, there’s not much you can do to remove yourself from its face database. Hill’s book tells the story of a German grad student who drew on German privacy law to demand that Clearview remove his data from its system. Clearview responded, essentially, that this was impossible: even if they removed his face today, their web scraper would just pick up his face again on its next scraping expedition. Paradoxically, the only thing the student could do to get Clearview to remove any matching photos was to upload a photo that they could filter against. In other words, to keep his face out of Clearview, he’d have to upload his face to Clearview.

Once this technology is adopted throughout society, there will be no escape.

Get Mark Hurst’s weekly writings in email: Subscribe. (Or join Creative Good.)

One bright spot in the privacy landscape is the state of Illinois, which passed the Biometric Information Privacy Act, or BIPA, in 2008. It’s against the law to collect biometric data (face, voice, fingerprint, gait, etc.) on Illinois citizens without their consent. A few other states have made inroads on privacy legislation, too. So perhaps the next step is to take the fight to the states.

The broader conclusion to draw from the Illinois legislation is that, contrary to what we constantly hear from Silicon Valley, the law is not too slow, or outdated, to keep up with tech. There’s plenty of evidence that the law can effectively put guardrails around facial recognition, if enough lawmakers refuse the lobbyists and their dollars. (In our interview, Hill mentions The Listeners, a book by Brian Hochman describing how Congress effectively responded to wiretaps – which is why surveillance cameras historically have not recorded audio. Then came Amazon’s surveillance doorbell, Ring, which is a different story.)

While we await better legislation, another option is to wear protection. Hill’s book mentions Reflectacles, privacy eyewear – from past Techtonic guest Scott Urban – that defeats infrared facial scanners. Soon enough we may need Reflectacles and a lot more. (I’m ready for Scott to develop a full-body suit of infrared-resistant privacy armor.)

In the end, whether it’s Reflectacles or a state law or some action that miraculously emerges from Congress, something has to change. While we wait, we’re keeping a lookout in the Creative Good community, discussing both the threats and hopeful developments in tech. Please join us.

-mark

Mark Hurst, founder, Creative Good – see our services or join as a member

Email: mark@creativegood.com

Listen to my podcast/radio show: techtonic.fm

Subscribe to my email newsletter

Sign up for my to-do list with privacy built in, Good Todo

On Mastodon: @markhurst@mastodon.social
